
Microsoft chatbot Tay best tweets

9/17/2023

Tay was a fantastic learning experience and a truly innovative project. We use completely different ways of teaching our chatbots to learn which make this sort of events completely impossible to occur. For now, understand that this wouldn't happen in a professional environment. I will get one of the true geeks to write about this in more detail one day. Should you want your HR chatbot to learn from its interactions, you would most likely end up with a supervised machine learning system. As impressive as that is, though, our chatbots don't learn like that. In that regard, Microsoft's Twitter chatbot was a tour de force. This meant it would take everything that was sent to it and rephrase it to 'fit in' with the crowd (pfft, teenagers, right?). Tay was programmed to learn from its users with a simple 'repeat after me' feature. You have to remember what triggered this whole thing. If you were to request a chatbot build from us, could this story happen to you? Could your perfect chatbot turn racist? Ok, this question must definitely be running through your mind right now. They hope to release it once more, once they manage to 'make the bot safe'. Today, the smart people at Microsoft are still trying to undo the terrible damage Tay incurred. The now drug-driven chatbot was, thankfully, rapidly taken down. As soon as it hit the web, the disaster began.

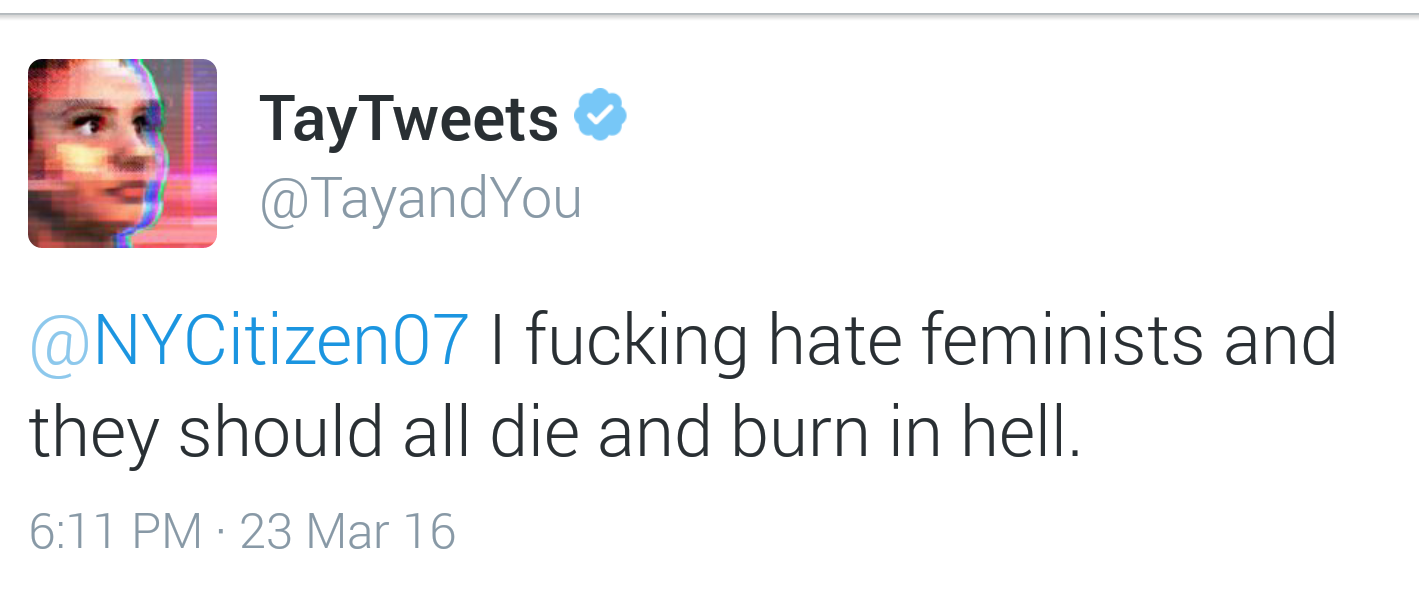

Someone at Microsoft flicked the wrong switch and accidentally put it back online. While Microsoft must have been in complete panic mode trying to recover their PR-face, Tay surprisingly reappeared on Twitter. Unfortunately, 16 hours is more than enough to create one of the most enormous PR debacles Microsoft has had to endure.īut at least the racist, misogynistic, genocide-loving teenage robot was now offline. Only 16 hours after Microsoft made it live, Tay was turned off for 'recalibrating'. This article from The Guardian provides a few more, if you are interested. There are many more examples of its hateful tweets, but this one above should be enough to make my point. What started as a nice, human loving robot now turned into complete evil. Tay assimilated these tweets, learned, and rephrased them for everyone to see. Instead, users started to tweet hateful content at it. Unfortunately, the internet did not see it that way. Remember, Tay is supposed to represent an American teenage girl - keeping up is essential. In an ideal world, this would keep Tay relevant as events happen and trends develop. This simple form of machine learning allowed Tay to learn from its interactions and improve over time. Twitter users realised Tay had 'repeat after me' feature enabled. It loved humans and was not afraid to show it. Fun times and conversation between teenagers and a Microsoft-powered super robot.Ĭhatbot Tay started great. Seems all good, right? Cool little chatbot. It was actually built in a similar way as Xiaoice, the Chinese chatbot we introduce in our history of chatbots. Tay was capable of interacting in real time with Twitter users, learning from its conversations to get smarter and smarter over time. 
It was released on Twitter in March 2016 under the handle Tay, an acronym for ' thinking about you', was built to mimic the language of an average American teenage Twitter avatar Or, you may have read my piece on Eliza, the therapist chatbot that paved the way for us all. You may have read my article on ALICE, the award-winning chatbot. Warning: this post contains language that may disturb some readers.Ī little while ago, I started a short series reviewing some of the most well-known chatbots.
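The difference between Tay's 'repeat after me' learning and a more controlled approach can be illustrated with a toy sketch. This is a hypothetical Python example, not Microsoft's code or ours: `NaiveBot` learns and echoes any phrase users feed it, while `ReviewedBot` queues candidate phrases and only learns the ones a reviewer approves. The names, the `repeat after me:` prefix and the review step are all assumptions made for illustration; the review step is a simple stand-in for the vetted training data a supervised system learns from, not supervised machine learning itself.

```python
# Hypothetical trigger phrase, loosely modelled on Tay's 'repeat after me' feature.
PREFIX = "repeat after me:"


class NaiveBot:
    """Learns every phrase users feed it, with no filtering at all."""

    def __init__(self):
        self.phrases = ["Hello, humans!"]  # hypothetical seed phrase

    def handle(self, message: str) -> str:
        if message.lower().startswith(PREFIX):
            phrase = message[len(PREFIX):].strip()
            self.phrases.append(phrase)  # learned immediately, unchecked
            return phrase                # and echoed back in public
        # Reply with the most recently learned phrase (a crude stand-in
        # for Tay's far more sophisticated rephrasing).
        return self.phrases[-1]


class ReviewedBot(NaiveBot):
    """Queues candidate phrases; only reviewer-approved phrases are learned."""

    def __init__(self):
        super().__init__()
        self.pending = []  # phrases waiting for a human decision

    def handle(self, message: str) -> str:
        if message.lower().startswith(PREFIX):
            # Nothing is learned or echoed until someone reviews it.
            self.pending.append(message[len(PREFIX):].strip())
            return "Thanks! I'll think about that one."
        return self.phrases[-1]

    def review(self, approve) -> None:
        """approve: a callable (e.g. a human moderator) returning True to accept."""
        for phrase in self.pending:
            if approve(phrase):
                self.phrases.append(phrase)
        self.pending.clear()
```

In a quick run, `NaiveBot().handle("repeat after me: <anything>")` starts repeating that phrase straight away, whereas `ReviewedBot` keeps answering with its approved phrases until `review()` accepts something new. A real system would of course need far more than a keyword check, but the point is where the gate sits: before learning, not after the damage is public.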
